The Senses of Machines: From Perception to Intelligence

In brief

The senses of machines show that applied intelligence begins not with decision-making but with perception. Cameras, LIDAR, thermal sensors, object recognition, and internal monitoring allow machines to capture their surroundings, navigate them, interpret them, and learn from them. In China, this sensory layer stands out not only for the technical level of its components, but also for the way it is integrated into broader technological and industrial ecosystems, where perception, software, industrial edge systems, manufacturing, and continuous improvement reinforce one another. Understanding that convergence is essential to any European strategic analysis of China.

Introduction

For a long time, we tended to imagine machines as bodies without a world: structures capable of carrying out movements, but not of truly perceiving what was happening around them. That image is now outdated.

During my years living in China, one of the things I have observed most clearly is that the evolution of robotics does not depend only on more precise arms, more sophisticated hands, or more advanced control systems. It also depends, increasingly, on the ability of machines to receive information from the outside world, interpret it, and act accordingly. In other words: on their senses.

This text is not intended to provide an exhaustive analysis of the senses of machines, but rather an introduction to some of the technological layers that make them possible and the industrial logic within which they are embedded.

A machine does not only act: it also perceives

A machine cannot navigate an environment well, avoid obstacles, recognise an object, inspect a facility, or interact with a person unless it first has a sensory layer capable of translating the world into processable signals. Just as in a living being perception comes before action, in a machine useful execution begins with an understanding of the environment.

That is why, when we speak about the senses of machines, we are not speaking only about sensors as technical parts. We are speaking about the bridge between the system and reality. About the layer that turns light, heat, distance, volume, movement, sound, pressure, or vibration into actionable information.

Machine vision: an introduction

Let us broaden the idea of “vision.” A machine may incorporate a conventional optical camera, comparable in part to the human eye or to a surveillance camera. But it can also integrate other imaging systems that provide other kinds of information, such as thermal cameras, infrared sensors, LIDAR systems, and time-of-flight (ToF) cameras, alongside non-imaging sensors such as microphones or distance-measurement systems.

In my analyses and reports on the Chinese technological ecosystem, one idea comes up repeatedly: these technologies do not simply see. They interpret. They detect patterns, recognise objects and scenes, calculate depth, anticipate trajectories, identify thermal anomalies, and help the machine decide its next move. And perhaps what matters most is not each of these “abilities” in isolation, but the convergence between all of them.

That is one of the reasons why artificial perception has become such a strategic layer within China’s technological and industrial ecosystems.

LIDAR, thermal, or infrared: different ways of seeing

Each of the systems mentioned above provides a different way of reading the environment.

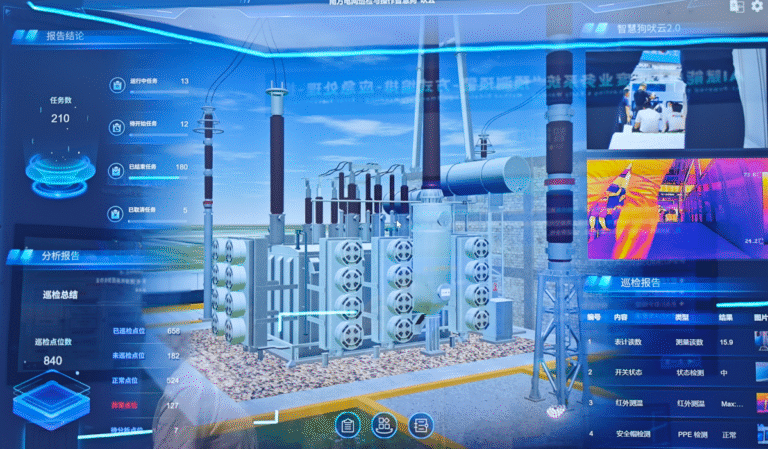

LIDAR, for example, does not “see” like a traditional camera. It emits pulses of light and measures their reflection to generate a cloud of spatial points. This makes it possible to calculate distances, changes in level, obstacles, or volumes with high precision, even in low light or darkness. Over the years, I have observed how this kind of perception is key to industrial drones, autonomous vehicles, patrol robots, infrastructure inspection, precision agriculture, and navigation in warehouses and ports.
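To make the principle concrete, here is a minimal sketch of how a pulse's round-trip time becomes a distance, and how a set of such readings becomes a point cloud. The function names and the input format (round-trip time, azimuth, elevation) are assumptions made for illustration; real LIDAR units expose their own data formats and apply calibration that is not shown here.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum, metres per second


def range_from_round_trip(round_trip_s: float) -> float:
    """Distance to the reflecting surface.

    The pulse travels out and back, so the one-way distance is half the path.
    """
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def to_point(range_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert one range reading plus the beam angles into an (x, y, z) point."""
    horizontal = range_m * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        range_m * math.sin(elevation_rad),
    )


def point_cloud(returns):
    """Build a point cloud from (round_trip_s, azimuth_rad, elevation_rad) tuples."""
    return [to_point(range_from_round_trip(t), az, el) for t, az, el in returns]
```

A pulse that returns after 200 nanoseconds, for instance, corresponds to a surface roughly 30 metres away.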

Thermal cameras, for their part, make it possible to detect heat, energy anomalies, or presence in contexts where visible images are not enough. Infrared systems extend that reading even further in difficult conditions.

And when these layers are combined with visual recognition, AI, and navigation software, the machine no longer simply records the world: it begins to build an operational interpretation of it. We can understand this convergence of technologies as a way of giving “judgment” to the robotic body, anticipating its next step, adjusting its behaviour, and preventing errors.

Recognising an object is more than just seeing it

A camera on its own does not guarantee understanding. What matters is whether the system is able to identify what it is seeing, interpret scenes, calculate context, and assign relevance to what it perceives. A traffic light is not just a coloured shape. A pipe is not just a line. A pedestrian, a gas leak, a crack, a defective part, or a weed in a field are not just images: they are events that must be properly identified for the machine to act as it should.

That is why, as can be seen in the Chinese ecosystem, the senses of machines tend to combine physical perception with algorithmic interpretation. The point is not just to capture more data, but to organise it better. That is one of the keys to technological deployment at scale: turning perception into operational judgment.

Key idea

The senses of machines do not simply capture the environment: they turn reality into the capacity to navigate, adjust, and learn.

External vs internal perception

Here a distinction appears that I find especially useful. A machine’s senses do not look only outward. They can also turn inward, towards the inside of the system itself.

In my analyses, I often distinguish between external perception and internal monitoring. The first makes it possible to orient the machine within its environment: optical and thermal cameras, LIDAR, microphones, or distance sensors help it navigate, identify people or objects, map spaces, and avoid collisions. The second deals with the functional state of the machine itself: pressure, vibration, temperature, voltage, wear, leaks, or internal anomalies.

This matters a great deal, because a truly useful machine must not only know where it is moving; it must also know what condition it is in. In that sense, and much like in living beings, the sensory layer does not function only as eyes and ears. It can better be compared to a nervous or visceral system that detects failures, anticipates breakdowns, and allows corrections before the problem becomes critical.

From perception to navigation

When that sensing layer is well integrated, the machine can navigate much more effectively.

It can calculate depth, recognise obstacles, correct trajectories, identify unexpected changes, adjust speed, or stop if it detects risk. It can patrol a complex environment, move through a factory, inspect an electrical substation, travel through a tunnel, enter a fire zone, assess a crop, or assist in rescue tasks.
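As a rough illustration of "adjust speed, or stop if it detects risk", the sketch below turns the distance to the nearest detected obstacle into a speed command. The function name and every threshold are hypothetical values chosen for the example, not parameters of any real robot, and a real controller would also account for braking distance and sensor noise.

```python
def safe_speed(
    nearest_obstacle_m: float,
    max_speed_m_s: float = 1.5,    # assumed cruising speed
    stop_distance_m: float = 0.5,  # assumed: at or below this distance, stop
    slow_distance_m: float = 3.0,  # assumed: at or beyond this distance, full speed
) -> float:
    """Return a speed command based on the closest detected obstacle."""
    if nearest_obstacle_m <= stop_distance_m:
        return 0.0
    if nearest_obstacle_m >= slow_distance_m:
        return max_speed_m_s
    # Between the two thresholds, scale the speed linearly with the distance.
    fraction = (nearest_obstacle_m - stop_distance_m) / (slow_distance_m - stop_distance_m)
    return max_speed_m_s * fraction
```

In practice, `nearest_obstacle_m` would come from the perception layer, for example the smallest range in the current depth or LIDAR reading.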

What matters is not only physical navigation. It is functional navigation. A machine that is well equipped at the sensory level does not just move better: it also understands better what is in front of it and what it needs to do with it.

Data is not wasted: it is accumulated

This is where the subject gains real depth. The data captured by cameras, sensors, and recognition systems is not only useful for resolving an immediate action. It can also be stored, compared, cross-referenced, and processed to improve the future behaviour of the system.

A trajectory corrected today can serve to optimise tomorrow’s. An anomaly detected on a production line can feed predictive maintenance models. A navigation pattern in a warehouse can help reorganise routes, timing, and resources.
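One simple way to picture how accumulated data feeds anomaly detection is a baseline comparison: past readings define what "normal" looks like, and new readings are flagged when they fall too far from it. This is a minimal sketch under that assumption; the function name, the threshold of three standard deviations, and the sample values are illustrative, and production predictive-maintenance models are considerably more elaborate.

```python
from statistics import mean, stdev


def find_anomalies(history, new_readings, threshold=3.0):
    """Flag new readings that deviate strongly from the accumulated history.

    `history` needs at least two values so that a standard deviation exists.
    """
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Perfectly constant history: any different value counts as unusual.
        return [r for r in new_readings if r != baseline]
    return [r for r in new_readings if abs(r - baseline) / spread > threshold]


# Hypothetical vibration readings from a production line (arbitrary units).
past = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95]
print(find_anomalies(past, [1.02, 1.6]))  # prints [1.6]
```

The more history the system accumulates, the better the baseline reflects normal operation, which is the sense in which perception supports learning and not only reaction.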

Put differently: perception does not only allow reaction. It also allows learning.

And when this logic is deployed at scale, sensing stops being a simple aid for one specific machine and begins to become an infrastructure of efficiency.

When one analyses the Chinese technological and industrial ecosystem, this pattern appears with great clarity: intelligence and control are being integrated ever more directly into machines and systems, often with the ability to adjust almost immediately, reducing friction and closing the loop more effectively between observation, decision, and iteration.

What this reveals about China

I am well aware that none of this is exclusive to China. Machine vision, LIDAR, thermography, and object recognition exist and are being developed in many other countries as well. But in China, two things tend to stand out with particular clarity: the scale of deployment and the capacity for integration.

It is not only a matter of more advanced sensors, but of how they are articulated within a broader execution architecture. An architecture capable of connecting perception, software, industrial edge systems, manufacturing, technical services, sector-specific applications, and continuous improvement with very little friction.

That is one of the reasons why, when analysing China, it is not enough to look at the final product. One has to read the ecosystem that makes it possible to deploy, adapt, and scale it quickly.

That is why I like to describe the Chinese industrial ecosystem as a dense, modular structure designed to recombine industrial functions and accelerate the transition between innovation, integration, and real-world use.

More than just robots

This sensory layer is not limited to visible robots.

In China, I have also observed industrial machines, infrastructure, and non-robotic systems equipped with sensors that make them monitorable, adaptable, and connected. And that points to an important idea: intelligence does not reside only in the robot. It can also be distributed across traditional machines, logistics networks, energy installations, production lines, urban spaces, or critical infrastructure. In my analyses, I often emphasise that in many cases, improving what already exists within a system through sensors is faster and more efficient than replacing it entirely.

That is where this subject stops being a purely technical matter and begins to have much broader implications for industry, energy, agriculture, logistics, medicine, defence, security, infrastructure maintenance, and public administration.

A new layer of perception

At its core, this is one of the most interesting transformations that can be observed in China today. Machines are no longer just bodies that carry out orders. They are systems that perceive, interpret, and adjust their behaviour according to what they detect. And the denser that layer of perception becomes, the closer we move to an environment in which efficiency will depend not only on having better machines, but on capturing, interpreting, and recording reality more effectively.

In the end, all applied intelligence begins there: in the quality of its senses.

A final idea

Ultimately, the senses of machines do not only expand what a machine can see, measure, or recognise. They also expand what an industrial system can learn, adjust, and optimise. Because when a machine perceives its environment and its own condition more effectively, it no longer merely executes: it begins to generate useful information to improve processes, reduce friction, and make decisions with greater precision. That is where this issue stops being purely technical. The senses of machines are not only a condition of autonomy; they are also one of the foundations of new industrial capability.

If you are interested in exploring these technologies and the ecosystem that sustains them, contact us.